Netapprove distills everything it sees on the wire into a single A–F grade that executives and engineers read the same way. The grade is recomputed every 60 seconds from live telemetry, not lifted from a stale audit last quarter.

The model is subtractive: you start at 100 and lose points for every weakness the AI actually observes: an unpatched host, an anomalous identity, a segment that should be isolated but isn’t, or traffic that violates policy. The grade reflects the network’s current posture, not its design intent.
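To make the subtractive model concrete, here is a minimal sketch. The finding names, per-finding penalties, and the 90/80/70/60 letter cutoffs are all hypothetical illustrations, not Netapprove’s actual weights or bands.

```python
# Hypothetical penalty per observed weakness (illustrative, not Netapprove's real values).
PENALTIES: dict[str, int] = {
    "unpatched_host": 8,
    "anomalous_identity": 10,
    "unisolated_segment": 12,
    "policy_violation": 5,
}

# Assumed letter cutoffs using conventional 90/80/70/60 bands; anything lower is F.
GRADE_BANDS = [(90, "A"), (80, "B"), (70, "C"), (60, "D")]


def posture_score(findings: list[str]) -> int:
    """Start at 100 and subtract a penalty for each observed weakness, flooring at 0.

    Unknown finding types cost nothing in this sketch.
    """
    score = 100
    for kind in findings:
        score -= PENALTIES.get(kind, 0)
    return max(score, 0)


def letter_grade(score: int) -> str:
    """Map a 0-100 posture score onto an A-F letter using the assumed bands."""
    for cutoff, letter in GRADE_BANDS:
        if score >= cutoff:
            return letter
    return "F"
```

Under these assumed weights, two unpatched hosts and one policy violation cost 21 points, leaving a score of 79 and a C.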

Traditional NDR ships a giant pile of rules and asks you to tune them. Netapprove ships a learning system instead. It builds a statistical portrait of every host, every service, every peer relationship in your network, and the moment reality stops fitting the portrait, it raises a hand.

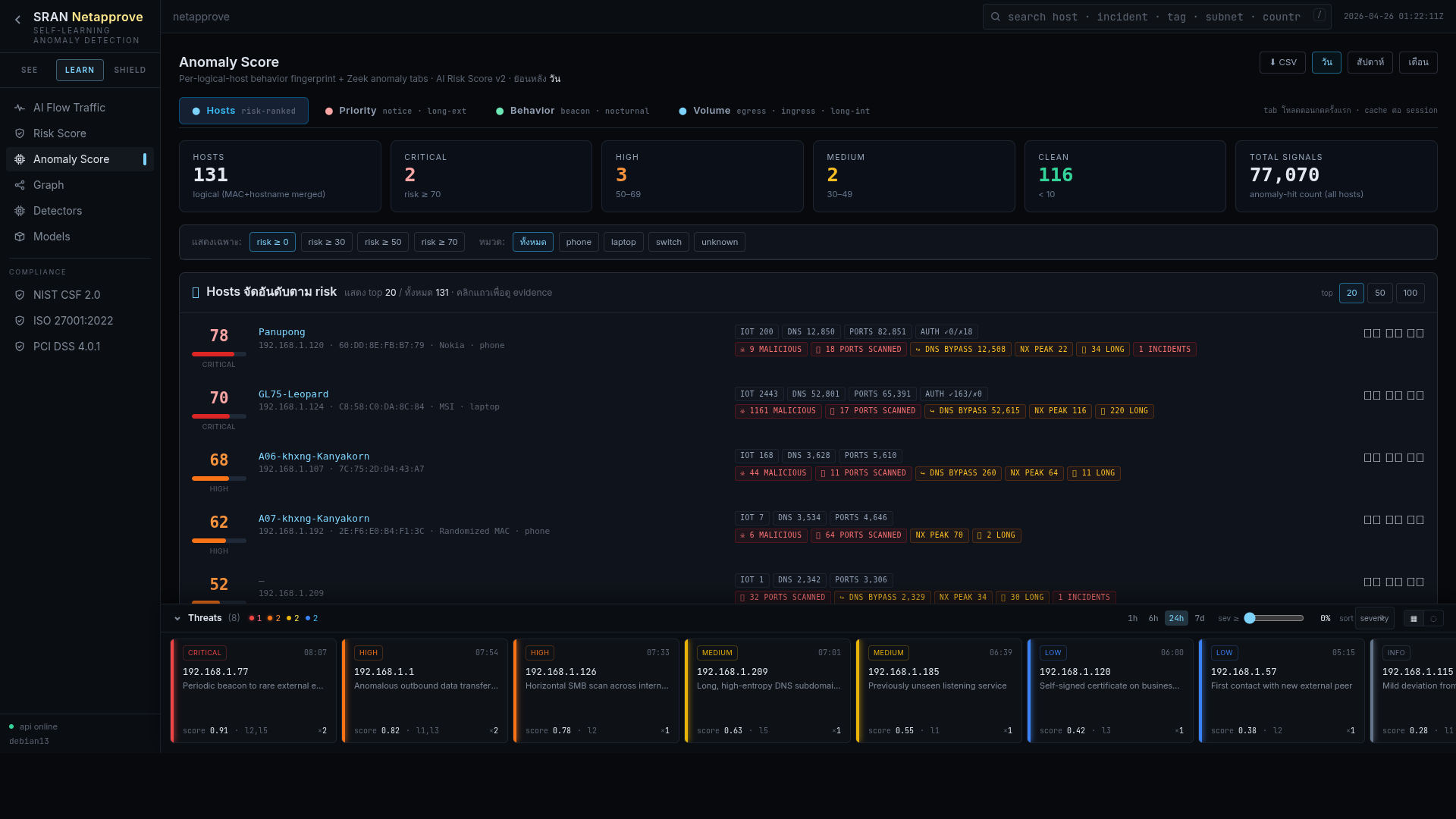

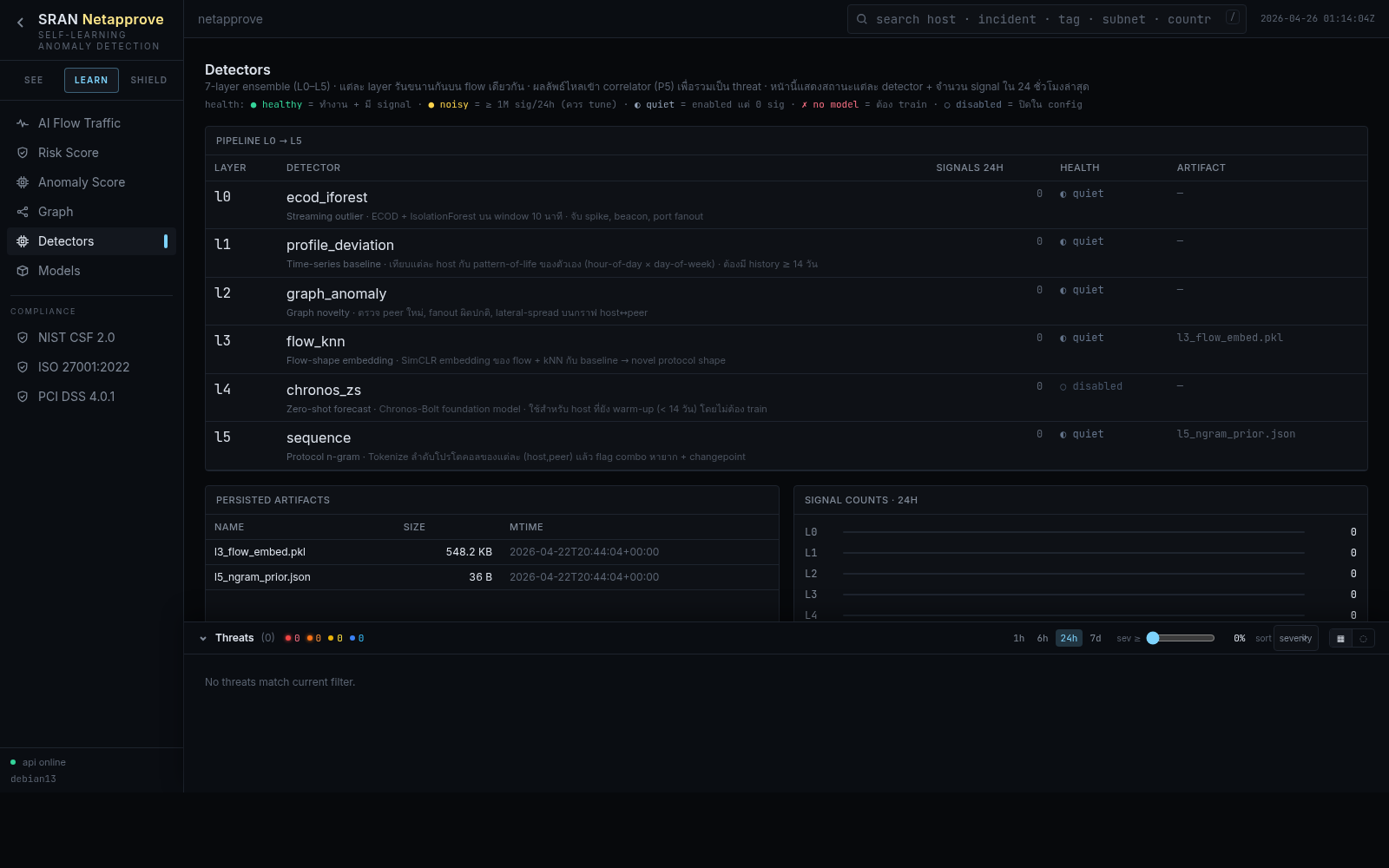

No single ML model can catch every shape of bad. Netapprove stacks seven detectors, each watching a different facet of your network, and a meta-scorer fuses their signals.

Per-host model of "who you usually talk to". A new peer triggers a flag scaled by the host’s historical fan-out.

Per-host model of "what services you usually expose / consume". A previously unseen listener is a strong signal.
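The two per-host novelty models above can be sketched with a pair of sets per host. This is a hypothetical illustration: the class name is invented, and the exact fan-out scaling is an assumption (here a new peer scores 1 / (1 + number of known peers), so it matters more on a quiet host than on a busy server).

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class HostProfile:
    """Hypothetical per-host baseline of known peers and listening ports."""

    peers: set[str] = field(default_factory=set)
    listeners: set[int] = field(default_factory=set)

    def learn(self, peer: Optional[str] = None, port: Optional[int] = None) -> None:
        """Fold an observed peer and/or listening port into the baseline."""
        if peer is not None:
            self.peers.add(peer)
        if port is not None:
            self.listeners.add(port)

    def peer_novelty(self, peer: str) -> float:
        """0.0 for a known peer; otherwise 1 / (1 + historical fan-out).

        Assumption: a new peer is more surprising on a host that has
        historically talked to few peers, so the score shrinks as fan-out grows.
        """
        if peer in self.peers:
            return 0.0
        return 1.0 / (1 + len(self.peers))

    def listener_novelty(self, port: int) -> float:
        """A previously unseen listening port is a strong, fixed-weight signal."""
        return 0.0 if port in self.listeners else 1.0
```

With three known peers a newcomer scores 0.25; on a server with 99 known peers the same newcomer would score 0.01.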

STL (seasonal-trend decomposition) plus an isolation forest on bytes, packets, and connection rate. Catches volumetric exfil and slow drips alike.
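The idea behind the volumetric detector is to strip out the expected seasonal pattern first and only then look for outliers. The sketch below is a deliberately simplified stand-in, not the STL + isolation-forest pipeline itself: the seasonal component is a per-phase median (no LOESS smoothing), and outliers are found with a median/MAD modified z-score instead of an isolation forest. Function names and the 3.5 threshold are illustrative assumptions.

```python
import statistics


def seasonal_residuals(series: list[float], period: int) -> list[float]:
    """Subtract a per-phase seasonal profile (e.g. hour-of-day) from a metric series.

    Simplified stand-in for STL: each phase's seasonal value is the median of
    that phase across all cycles. Assumes at least one full cycle of data.
    """
    profile = [statistics.median(series[phase::period]) for phase in range(period)]
    return [value - profile[i % period] for i, value in enumerate(series)]


def robust_outliers(residuals: list[float], threshold: float = 3.5) -> list[int]:
    """Indices whose modified z-score (median/MAD based) exceeds the threshold.

    Stand-in for the isolation forest: a univariate robust score rather than
    an ensemble of random partitioning trees.
    """
    med = statistics.median(residuals)
    mad = statistics.median(abs(r - med) for r in residuals)
    if mad == 0:
        # More than half the residuals equal the median; anything else stands out.
        return [i for i, r in enumerate(residuals) if r != med]
    return [i for i, r in enumerate(residuals) if 0.6745 * abs(r - med) / mad > threshold]
```

Because the seasonal profile uses medians, a single exfil spike does not drag the baseline up with it, so the spike survives into the residuals where it is easy to flag.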

Encodes the order of protocol events; flags kill-chain progressions like recon → lateral → C2.
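A simple way to see what "encoding order" buys is an ordered-subsequence check: how far along recon → lateral → C2 has a host progressed, in order, with unrelated events allowed in between? This is a hypothetical illustration, not Netapprove’s sequence model; the event names and their stage mapping are invented.

```python
# Hypothetical mapping from observed protocol events to kill-chain stages.
STAGE_OF_EVENT = {
    "icmp_sweep": "recon",
    "port_scan": "recon",
    "smb_admin_share": "lateral",
    "rdp_new_peer": "lateral",
    "beacon_periodic": "c2",
}

KILL_CHAIN = ("recon", "lateral", "c2")


def progression_depth(events: list[str], chain: tuple[str, ...] = KILL_CHAIN) -> int:
    """Count how many kill-chain stages appear, in chain order, in a host's events.

    Stages need not be adjacent; events with no stage, or out-of-order stages,
    are skipped. Greedy matching finds the longest in-order prefix of the chain.
    """
    depth = 0
    for event in events:
        stage = STAGE_OF_EVENT.get(event)
        if depth < len(chain) and stage == chain[depth]:
            depth += 1
    return depth
```

A C2 beacon seen before any recon does not count toward progression, which is the point: the same events in a different order tell a different story.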

Spectral analysis and community detection on the host-peer graph; surfaces rogue bridges and pivot nodes.
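To illustrate what a "pivot node" means structurally, the sketch below finds articulation points: hosts whose removal would split the host-peer graph into disconnected pieces. This swaps in a classical technique (Tarjan’s DFS low-link algorithm) for the spectral and community methods described above; it is an illustration of the concept, not Netapprove’s detector.

```python
from collections import defaultdict
from typing import Optional


def pivot_hosts(edges: list[tuple[str, str]]) -> set[str]:
    """Articulation points of an undirected host-peer graph (Tarjan's DFS).

    A host is returned if removing it disconnects part of the graph, i.e. it is
    the only path between groups of hosts. Recursive, so suited to modest graphs.
    """
    graph: dict[str, set[str]] = defaultdict(set)
    for a, b in edges:
        graph[a].add(b)
        graph[b].add(a)

    disc: dict[str, int] = {}  # DFS discovery order of each host
    low: dict[str, int] = {}   # earliest discovery order reachable via back edges
    pivots: set[str] = set()
    timer = 0

    def dfs(node: str, parent: Optional[str]) -> None:
        nonlocal timer
        disc[node] = low[node] = timer
        timer += 1
        children = 0
        for peer in graph[node]:
            if peer == parent:
                continue
            if peer in disc:
                low[node] = min(low[node], disc[peer])
            else:
                children += 1
                dfs(peer, node)
                low[node] = min(low[node], low[peer])
                # Non-root: pivot if some child subtree cannot reach above this node.
                if parent is not None and low[peer] >= disc[node]:
                    pivots.add(node)
        # Root: pivot only if it has more than one DFS child.
        if parent is None and children > 1:
            pivots.add(node)

    for node in list(graph):
        if node not in disc:
            dfs(node, None)
    return pivots
```

In a graph where two tight clusters are joined only through a gateway host, the gateway and the cluster members it touches are returned, which is exactly the kind of unexpected bridge worth a second look.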

Per-account model of where, when, and how you log in. Impossible travel, off-hours bursts, golden tickets.
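Impossible travel is the most mechanical of these identity checks: compute the great-circle distance between two consecutive logins for the same account and ask whether the implied speed is plausible. A minimal sketch follows; the `Login` record, the 900 km/h ceiling, and the 100 km noise floor for geolocation jitter are assumptions, not Netapprove parameters.

```python
import math
from dataclasses import dataclass
from datetime import datetime


@dataclass
class Login:
    account: str
    when: datetime
    lat: float
    lon: float


EARTH_RADIUS_KM = 6371.0
MAX_PLAUSIBLE_KMH = 900.0  # assumed ceiling, roughly airliner cruise speed
MIN_DISTANCE_KM = 100.0    # assumed floor to ignore IP-geolocation jitter


def haversine_km(a: Login, b: Login) -> float:
    """Great-circle distance between two login locations, in kilometres."""
    phi1, phi2 = math.radians(a.lat), math.radians(b.lat)
    dphi = phi2 - phi1
    dlmb = math.radians(b.lon - a.lon)
    h = math.sin(dphi / 2) ** 2 + math.cos(phi1) * math.cos(phi2) * math.sin(dlmb / 2) ** 2
    return 2 * EARTH_RADIUS_KM * math.asin(min(1.0, math.sqrt(h)))


def impossible_travel(prev: Login, curr: Login) -> bool:
    """True if getting from prev to curr (same account assumed) needs an implausible speed."""
    distance = haversine_km(prev, curr)
    if distance < MIN_DISTANCE_KM:
        return False
    hours = abs((curr.when - prev.when).total_seconds()) / 3600
    if hours == 0:
        return True
    return distance / hours > MAX_PLAUSIBLE_KMH
```

A London login followed by a New York login one hour later implies roughly 5,570 km/h and is flagged; the same pair ten hours apart implies about 557 km/h and passes.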

Continuous KEV / NVD / threat-intel ingestion enriches every external endpoint at scoring time.

A learned weighting that fuses the seven signals into a single calibrated incident score.
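One common way to fuse several detector outputs into a single calibrated score is a logistic model: a weighted sum of the per-detector scores passed through a sigmoid. The sketch assumes that form; the detector names, weights, and bias below are hypothetical placeholders for values that would be learned (for example by logistic regression on analyst-labelled incidents).

```python
import math

# Hypothetical per-detector weights and bias; real values would be learned, not hand-set.
WEIGHTS = {
    "peer": 1.2,
    "service": 1.5,
    "volume": 1.0,
    "sequence": 2.0,
    "graph": 1.1,
    "identity": 1.8,
    "intel": 1.4,
}
BIAS = -4.0


def incident_score(signals: dict[str, float]) -> float:
    """Fuse per-detector scores in [0, 1] into one probability-like incident score.

    Missing detectors contribute 0. The sigmoid keeps the result in (0, 1);
    calibration depends on how the weights were trained.
    """
    z = BIAS + sum(WEIGHTS[name] * signals.get(name, 0.0) for name in WEIGHTS)
    return 1.0 / (1.0 + math.exp(-z))
```

The negative bias means a quiet network scores near zero, and no single weak signal is enough on its own; several detectors have to agree before the fused score climbs.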

Drift detection retrains models on a sliding window so seasonal changes don’t keep triggering alerts.
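The sliding-window half of this idea can be sketched in a few lines: the baseline is fitted only on the most recent observations, so a lasting shift alerts once and is then absorbed. This is a hypothetical illustration in which "retraining" is just recomputing mean and standard deviation; the window size and z-score threshold are assumptions, and no separate drift statistic is modelled.

```python
import statistics
from collections import deque


class SlidingBaseline:
    """Baseline over the most recent `window` observations (hypothetical sketch).

    Each new value is scored against the current window, then added to it,
    so old behaviour ages out and a new normal stops alerting.
    """

    def __init__(self, window: int = 168, z_threshold: float = 3.0) -> None:
        self.history: deque[float] = deque(maxlen=window)
        self.z_threshold = z_threshold

    def observe(self, value: float) -> bool:
        """Return True if value is anomalous versus the current window, then learn it."""
        alert = False
        if len(self.history) >= 2:
            mean = statistics.fmean(self.history)
            stdev = statistics.stdev(self.history)
            if stdev > 0:
                alert = abs(value - mean) / stdev > self.z_threshold
            else:
                alert = value != mean
        self.history.append(value)
        return alert
```

A step change from around 100 to around 200 alerts on its first sample; once the window has filled with the new level, the same values score as normal.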

Every alert ships with a Claude-written narrative: what the host normally does, what it just did, why that’s unusual, what the most likely benign and malicious explanations are, and what to do next. Analysts spend their time deciding, not decoding.

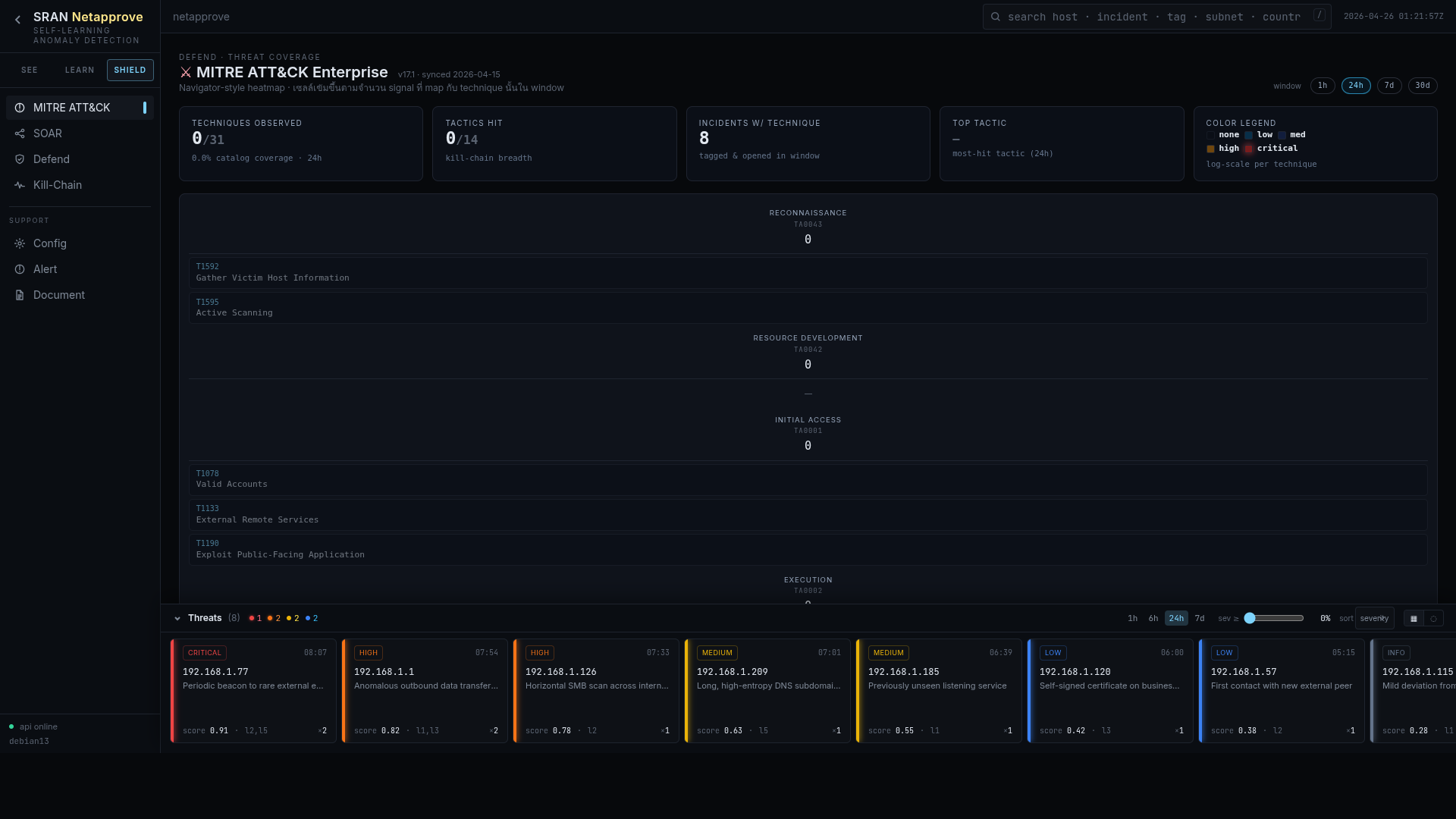

Every incident is auto-tagged with the techniques it most likely represents, surfaced as a coverage heatmap so you can see where you’re strong and where the AI is hungry for more telemetry.

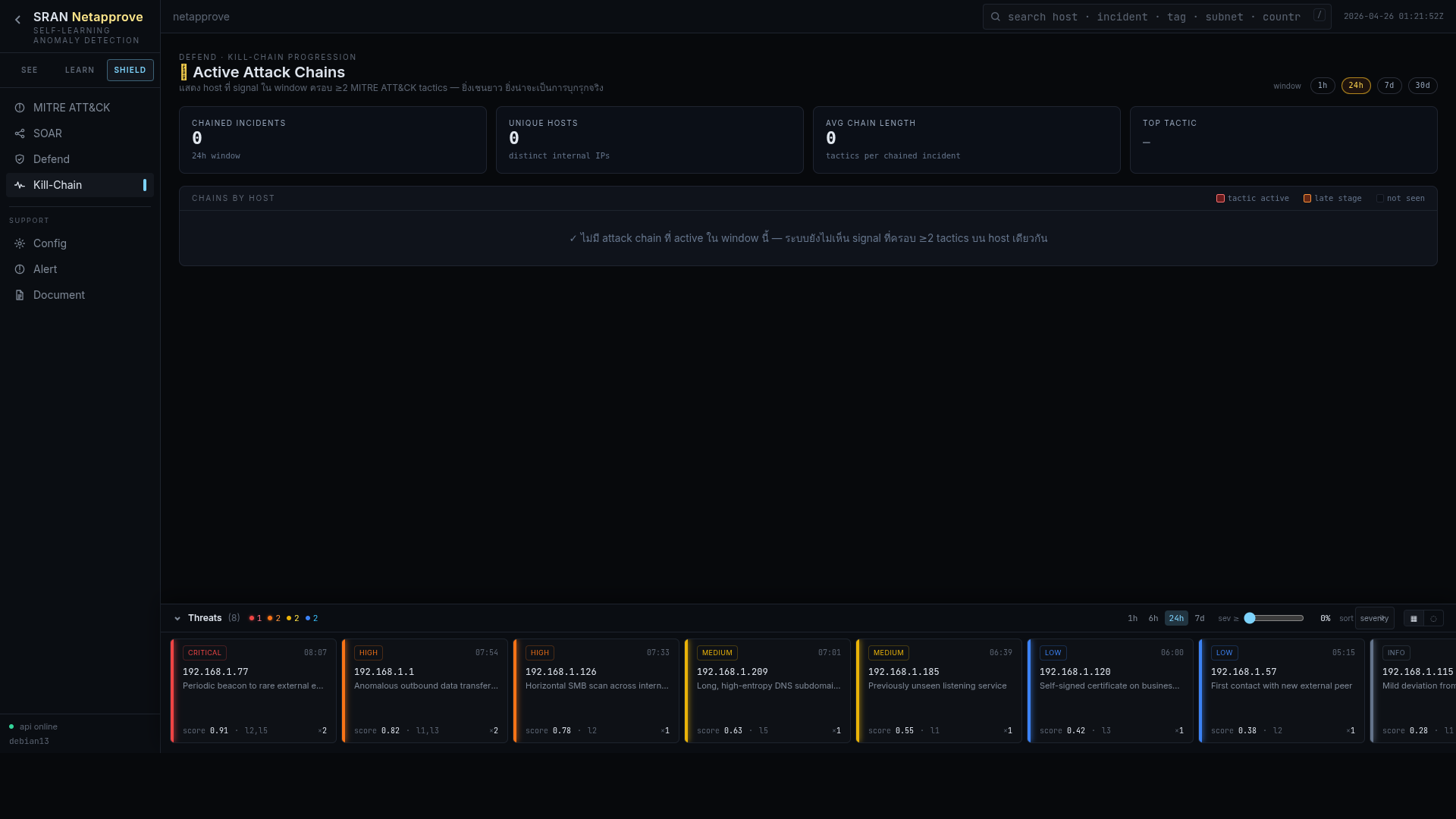

Individual events rarely tell the story; sequences do. Netapprove stitches related anomalies into a kill-chain timeline so a recon ping, a brute-force, and an exfil burst are read as one incident, not three.
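A minimal way to stitch anomalies into incidents is to group them by host and chain together events that arrive within a time gap of the previous one. The sketch assumes a same-host key and a 30-minute gap, both hypothetical; real stitching would likely also follow host-to-host links.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class Anomaly:
    host: str
    when: datetime
    kind: str


def stitch(anomalies: list[Anomaly], max_gap: timedelta = timedelta(minutes=30)) -> list[list[Anomaly]]:
    """Group anomalies into incidents: same host, each within max_gap of the previous one.

    Incidents are returned in the order they were opened.
    """
    incidents: list[list[Anomaly]] = []
    open_incident: dict[str, list[Anomaly]] = {}  # latest incident per host
    for a in sorted(anomalies, key=lambda x: x.when):
        current = open_incident.get(a.host)
        if current is not None and a.when - current[-1].when <= max_gap:
            current.append(a)
        else:
            current = [a]
            open_incident[a.host] = current
            incidents.append(current)
    return incidents
```

A recon ping, a brute-force, and an exfil burst on the same host 10 and 25 minutes apart come back as one incident, while activity on another host, or hours later, opens a new one.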

The biggest cost of legacy NDR isn’t licensing; it’s analyst time burned on noise. Netapprove’s LLM triage loop re-reads every fresh alert against the host’s history and the last 24 hours of context. Confirmed-benign alerts auto-close with a written justification you can audit.
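The shape of the triage loop can be sketched without any model at all: gather the host’s recent context, ask a judge for a verdict plus justification, and auto-close only when the verdict is benign, keeping the justification for audit. In this hypothetical sketch the `judge` callable stands in for the LLM call (no real API is shown), and context is simplified to the same host’s alerts from the previous 24 hours.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Callable


@dataclass
class Alert:
    host: str
    when: datetime
    summary: str
    status: str = "open"
    justification: str = ""


# (is_benign, written_justification); stands in for the LLM's structured answer.
Verdict = tuple[bool, str]


def triage(
    alert: Alert,
    history: list[Alert],
    judge: Callable[[Alert, list[Alert]], Verdict],
    lookback: timedelta = timedelta(hours=24),
) -> Alert:
    """Re-read an alert against the same host's recent alerts; auto-close if judged benign."""
    context = [
        h for h in history
        if h.host == alert.host and alert.when - lookback <= h.when < alert.when
    ]
    benign, why = judge(alert, context)
    if benign:
        alert.status = "auto_closed"
        alert.justification = why  # retained so every auto-close can be audited
    return alert
```

Alerts the judge cannot confirm as benign stay open for an analyst; the loop only ever removes work, never hides an alert without a recorded reason.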

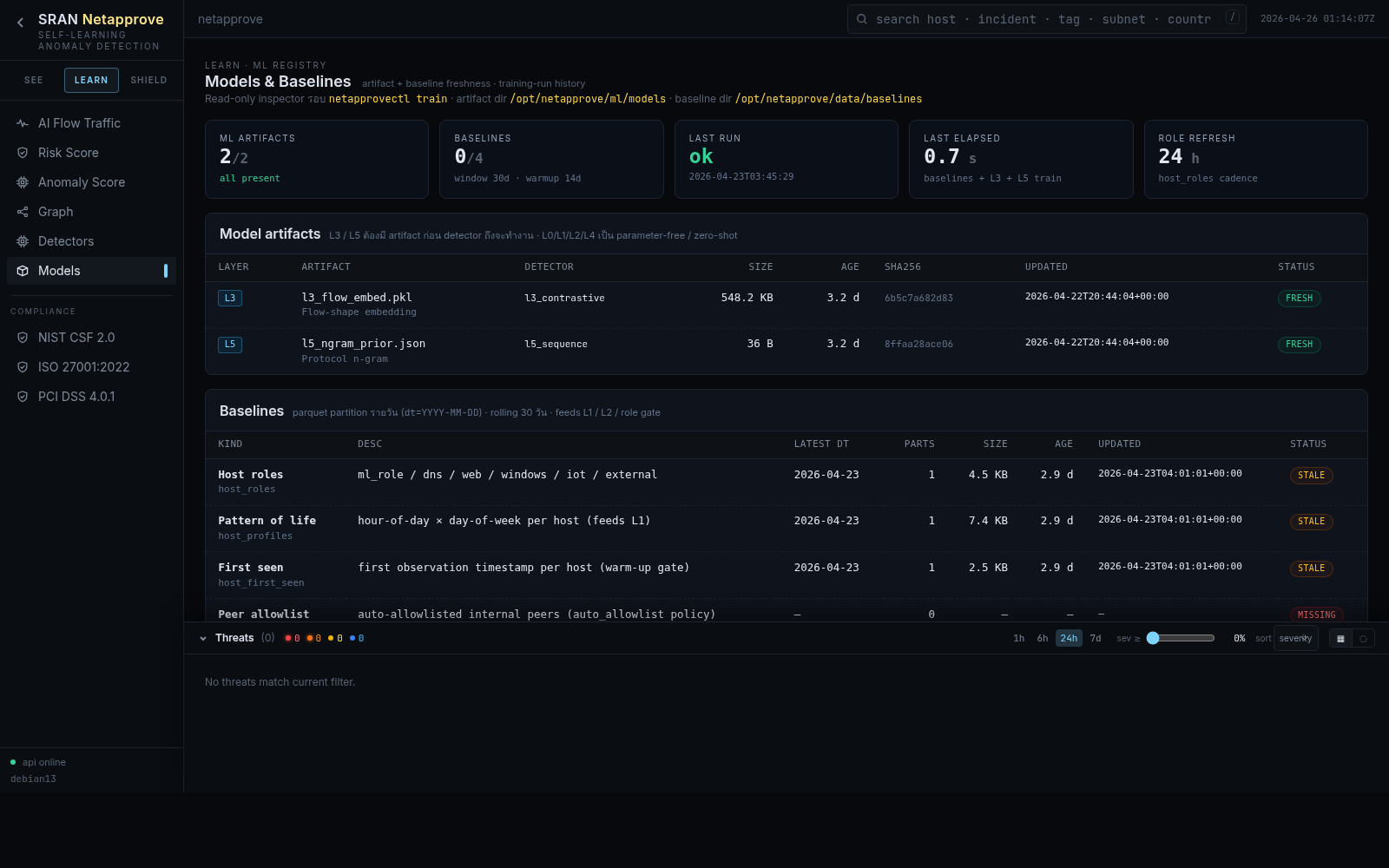

AI without auditability is a liability. The Models page exposes every active learner, its training window, drift status, hit rate, and current weight in the ensemble. No black boxes.